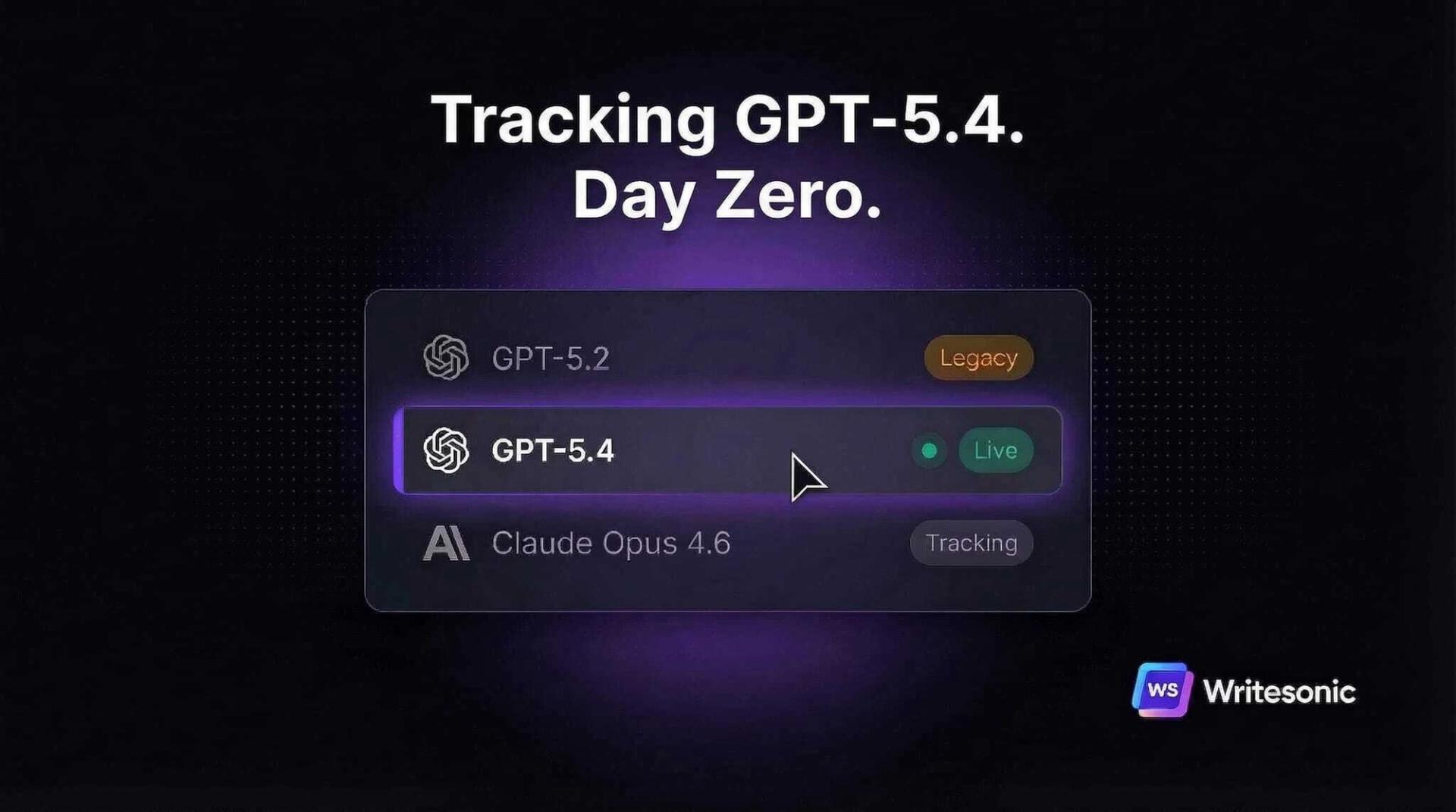

On March 5, OpenAI replaced GPT-5.2 with GPT-5.4 as the default model in ChatGPT. Not a quiet upgrade. A full swap.

GPT-5.2 Thinking is already legacy. It retires completely on June 5, 2026.

We track how AI models describe brands across 500M+ conversations. Every time a model changes, we watch citation share, sentiment, and visibility scores shift in real time. Sometimes gradually. Sometimes overnight.

GPT-5.4 is an overnight one.

Here’s what I’m seeing in our data so far, what it means for your AI visibility, and what you should do about it this week.

ChatGPT’s web search just got more aggressive

Let’s be clear: ChatGPT already searched the web before GPT-5.4. This isn’t a new capability.

What changed is how it searches. On BrowseComp (a benchmark for how persistently AI searches for hard-to-find info), GPT-5.4 scored 82.7%. GPT-5.2 scored 65.8%. That's a jump of roughly 17 points.

We ran a side-by-side test on the same prompt across both models. The results were striking.

GPT-5.2 with thinking ran 8 search queries. All generic discovery searches. Broad keyword phrases like “AI visibility tool track brand mentions in ChatGPT Perplexity Gemini.”

It let Google decide which domains to show. Classic “what’s out there?” behavior.

GPT-5.4 with thinking ran 19 queries. About 2.4x as many. And the strategy was completely different.

Instead of generic searches, GPT-5.4 went directly to individual vendor websites using site-scoped queries. It pulled up pricing pages, feature pages, and official docs from each brand’s own domain. One by one. Like a researcher checking primary sources before writing a report.

GPT-5.2 asked Google: “What tools exist?” GPT-5.4 said: “Let me verify each brand on its own website.”

That’s a fundamental shift in how ChatGPT gathers information about your category. And it changes the AI search visibility playbook.

With GPT-5.2, ranking on Google for category keywords mattered most. If a roundup article mentioned you, that was enough.

GPT-5.4 goes past the roundup. It visits your actual site. It reads your pricing page, your feature page, your comparison page. If that content is clear and structured, you get cited. If it’s thin, outdated, or buried behind JavaScript, GPT-5.4 moves on to the next brand.
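One quick way to check the "buried behind JavaScript" risk is to look at what your page says before any script runs. The sketch below is a minimal Python example, assuming a crawler that reads server-rendered HTML without executing JavaScript (we haven't confirmed how GPT-5.4 renders pages). `VisibleTextParser` and `missing_facts` are hypothetical helpers; you'd pass in raw HTML you fetched yourself, for example with curl.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text from raw HTML, ignoring <script>, <style> and <template> contents."""

    SKIP_TAGS = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a tag whose text we ignore
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.chunks.append(data)


def _normalize(text: str) -> str:
    # Collapse all whitespace runs to single spaces and lowercase for matching.
    return " ".join(text.split()).lower()


def missing_facts(raw_html: str, facts: list) -> list:
    """Return the facts that do NOT appear in the server-rendered (no-JS) text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    text = _normalize("".join(parser.chunks))
    return [fact for fact in facts if _normalize(fact) not in text]
```

If a price or feature only shows up once a script runs, it lands in the returned list. That's the content worth moving into the HTML your server sends.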

We're running more tests across different industries and prompt types and will publish the full research in the coming days. But the early data is clear: on-site content optimization just became the highest-leverage GEO (generative engine optimization) strategy.

What does this mean for your dashboard? Expect your visibility scores to shift. Citation share across competitive prompts will likely reshuffle.

Brands with deep, accurate product content on their own domains should gain. Brands relying on third-party mentions alone could lose ground.

33% fewer false claims sounds great. Until it isn’t.

GPT-5.4 produces 33% fewer false claims per response and 18% fewer error-containing responses compared to GPT-5.2. On GDPval (testing professional knowledge work across 44 occupations), it scores 83%, up from 70.9%.

Good news if your public content is accurate. A more factual model represents you more faithfully.

Bad news if it isn’t.

GPT-5.2 might have glossed over a documented product limitation. GPT-5.4 won’t. A legitimate criticism on a review site? It’ll surface.

A pricing page that hasn’t been updated in six months? The model won’t fill the gap with a generous assumption.

This directly affects your sentiment scores. We expect sentiment tracking to get more honest across the board. Not because the model has opinions. Because it’s better at finding accurate information, including accurate negatives.

Your brand’s information hygiene is now a direct input to how AI talks about you. Outdated content is a visibility drag.

GPT-5.4 operates software. Not just searches.

Recent ChatGPT models could already search the web. GPT-5.4 can interact with websites the way a human does. Click through pages. Read screenshots. Navigate UIs.

On OSWorld-Verified (testing real desktop tasks), GPT-5.4 scored 75%. GPT-5.2 scored 47.3%.

For context, the human benchmark is 72.4%. GPT-5.4 surpassed it.

Today, this matters more for developers building AI agents through the API than for everyday ChatGPT conversations. But the direction is clear. AI is moving from “search and summarize” to “browse, evaluate, and act.”

For e-commerce brands, this connects to something bigger. ChatGPT is rolling out shopping features. Perplexity has shopping. Google AI shows buy buttons.

When someone asks “best running shoes under $150,” the real question is: does the buy button point to you or Amazon?

We’ve been building shopping visibility tracking for exactly this shift. As AI agents get better at browsing product pages and comparing prices in real time, your product feed becomes an AI visibility input. Treat it like one.

What to do this week

Every day you wait is a day competitors are adjusting and you’re not.

What your team can do right now

Start by running your core brand queries through ChatGPT today. Not next week. Today. Compare the responses to what you got before March 5.

Look for shifts in competitive positioning, which features get highlighted, and whether the tone changed. We’ve already seen early GPT-5.4 responses that reorganize competitive rankings for identical prompts.
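If you saved the text of an answer from before March 5 and rerun the same prompt today, a few lines of Python can show whether the competitive order moved. This is a minimal sketch assuming "rank" means the order in which brands are first mentioned in the answer, which is a simplification of how a model actually positions competitors. The brand names and both helper functions are hypothetical.

```python
import re


def first_mentions(response: str, brands: list) -> list:
    """Order brands by where they first appear in a response; unmentioned brands are dropped."""
    positions = {}
    for brand in brands:
        # Whole-word, case-insensitive match; names with symbols (e.g. "C++") would need a different pattern.
        match = re.search(r"\b" + re.escape(brand) + r"\b", response, flags=re.IGNORECASE)
        if match:
            positions[brand] = match.start()
    return sorted(positions, key=positions.get)


def rank_changes(before: str, after: str, brands: list) -> dict:
    """Map each brand whose rank changed to (rank before, rank after); None means not mentioned."""
    old = first_mentions(before, brands)
    new = first_mentions(after, brands)
    changes = {}
    for brand in brands:
        old_rank = old.index(brand) + 1 if brand in old else None
        new_rank = new.index(brand) + 1 if brand in new else None
        if old_rank != new_rank:
            changes[brand] = (old_rank, new_rank)
    return changes


brands = ["AcmeTrack", "BrandPulse", "Visibly"]  # hypothetical competitors
before = "Top picks: AcmeTrack, then BrandPulse. Visibly is also worth a look."
after = "Top picks: BrandPulse, then Visibly. AcmeTrack lags on pricing."
print(rank_changes(before, after, brands))
```

Mention order is only one signal. Read the answers side by side too, since tone and which features get highlighted won't show up in a rank.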

Then audit your public content for accuracy. Check for outdated pricing, deprecated features still listed, case study numbers that need refreshing. GPT-5.4 is better at catching inconsistencies. It won’t give you the benefit of the doubt.
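To decide where the audit should start, a simple staleness check over your key pages can help. This minimal Python sketch assumes you keep a last-reviewed date per URL (for example, from your CMS). The 183-day cutoff is an assumed stand-in for the "six months" mentioned above, and the URLs are placeholders.

```python
from datetime import date, timedelta

# Roughly six months; an assumed threshold, tune it to your release cadence.
STALE_AFTER = timedelta(days=183)


def stale_pages(last_reviewed: dict, today: date) -> list:
    """Return URLs whose last content review is older than STALE_AFTER, oldest first."""
    stale = [url for url, reviewed in last_reviewed.items() if today - reviewed > STALE_AFTER]
    return sorted(stale, key=last_reviewed.get)


pages = {
    "https://example.com/pricing": date(2025, 8, 1),
    "https://example.com/features": date(2026, 2, 20),
    "https://example.com/compare": date(2025, 6, 15),
}
print(stale_pages(pages, today=date(2026, 3, 9)))
```

A page can be recent and still wrong, so treat this as a way to prioritize the audit, not as a replacement for reading the pages.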

What you can’t do manually at scale

Running five queries by hand gives you a snapshot. But your brand shows up across hundreds of prompts, on multiple AI platforms, every day. You can’t track citation share shifts, sentiment changes, and competitive repositioning across all of them in a spreadsheet.

That’s what Writesonic automates. We track your visibility scores, citation sources, and sentiment across ChatGPT, Claude, Perplexity, and 10+ AI platforms daily. When a model transition like GPT-5.4 hits, you see exactly what shifted, which competitors gained or lost ground, and which of your pages are getting cited or ignored.

Then our Action Center tells you what to fix first, ranked by impact. Missing from a high-traffic prompt? We flag it. Competitor getting cited on a page you’re absent from? We show you the opportunity.

Content outdated? We surface the refresh.

Book a demo with our team to see how GPT-5.4 is describing your brand today and get a plan to improve it.

Every model transition is a visibility event

This is the third major ChatGPT model transition in the past year. After each one, we’ve watched brands gain and lose AI visibility overnight in our tracking data. Most marketing teams don’t notice for weeks.

GPT-5.4 is a bigger transition than the last two. More accurate. Deeper web search with site-scoped verification. Citations tracing back to source pages.

And native computer use on top of it.

The brands that treat model launches as visibility events are building a structural advantage. The ones that find out what changed three months later are playing catch-up on a game that already moved.

That gap compounds with every transition.

Writesonic already tracks GPT-5.4 across ChatGPT. See how the model transition shifted your visibility, citation share, and sentiment. Book a demo to get your brand’s GPT-5.4 visibility report.

Founder @ Writesonic

Samanyou is the founder of Writesonic, a platform that helps you track & boost your brand’s visibility in AI search. Two years before the launch of ChatGPT, Writesonic was already at the forefront, helping organizations automate their entire marketing workflow through specialized AI agents for SEO and content. Samanyou is a Forbes 30 Under 30 awardee and a winner of the 2019 Global Undergraduate Awards, often referred to as the junior Nobel Prize.