We track AI citations across hundreds of brands at Writesonic.

One pattern keeps repeating: pages ranking in Google’s top 3 get passed over by ChatGPT, Perplexity, and Gemini in favor of competitors sitting at position 8 or lower.

Not because the ranking page is worse, but because it’s harder for AI to extract from.

A lot of content teams still assume that if a page is good enough to rank, it should be good enough to get picked up by ChatGPT, Perplexity, Gemini, or Google AI Overviews. But that’s not how these systems work. Google ranks pages. AI extracts answers.

And once you see that clearly, a lot of confusing outcomes suddenly make sense.

In this guide, I’m going to break down:

• what citation readiness actually means

• how AI decides what to cite

• the patterns behind pages that consistently earn citations

• a practical 31-point checklist to run before publishing

TL;DR

Ranking on Google and getting cited by AI are not the same game. Google ranks pages. AI extracts answers. And the page that wins the citation is usually not the best-written one. It’s the most extractable one.

Pages with more complete answers earn 8x more AI citations. Meanwhile, 73% of websites have technical barriers blocking AI crawlers, and their teams often don’t know it. The first 1-2 sentences of your page are what AI pulls for citations. If the opening is a hook or a hot take instead of a clean answer, AI skips you.

Citation-ready content gets seven things right: a clean opening answer, complete topic coverage, visible authorship, crawler accessibility, extraction-friendly structure, distribution beyond your own site, and iteration after publish. Most teams nail the first three and ignore the rest.

This guide breaks down each area with examples, then gives you a 31-point checklist to score your content before every publish.

What is citation readiness?

Citation readiness is the practice of structuring content so AI models can extract, understand, and reference it in generated answers.

That includes systems like ChatGPT, Perplexity, Gemini, and Google AI Overviews.

This is the shift. Traditional SEO asks whether a page can rank. Citation readiness asks whether a page can be lifted, trusted, and attributed inside an AI-generated answer. Those are related goals, but they’re not identical.

A citation-ready page usually gets seven things right:

1. It opens with a clean answer

2. It covers the topic completely

3. It makes authorship and expertise obvious

4. It’s technically accessible to AI crawlers

5. It’s structured for extraction

6. It gets distribution beyond your own site

7. It gets updated after publishing based on real-world performance

Most teams are still focused on the first half of that list. AI visibility depends on the full set.

Why does content rank on Google but stay invisible to AI?

Because Google and AI systems are doing different jobs.

Google’s job is to return a ranked list of pages. It weighs authority, backlinks, page speed, relevance, freshness, and hundreds of other signals to decide where a page should appear.

AI systems work differently. They generate an answer, then decide which sources are trustworthy and easy enough to extract from.

That’s why a page ranking #3 can lose the citation to a page ranking #8.

It sounds unfair until you realize AI is not rewarding the “best” page in the traditional SEO sense. It’s looking for the page that gives it the clearest usable answer with the least friction.

Here’s the truth: a lot of teams are still optimizing for discoverability alone. They also need to optimize for extractability.

How does AI decide what to cite?

Here’s what I’m seeing in our data at Writesonic: the pages that consistently win citations aren’t the ones with the highest domain authority. They’re the ones where the first 200 words read like a clean, self-contained answer. That’s the extraction test, and most content fails it.

AI models usually cite the most extractable page from a pool of credible sources.

Not always the highest-ranking page. Not always the longest page. Not even always the most insightful page.

The winning page is often the one that makes the model’s job easiest.

Otterly’s 2026 citation research helps explain this. Their findings showed that pages with more complete answers earned 8x more citations. Not pages with more words. Not pages with more personality. Pages with more complete answers.

Profound’s research points in the same direction. It found that models build what it calls source stacks, then select the source that feels easiest to trust and quote from.

That means a page is more likely to be cited when it has:

• a direct definition near the top

• clear topical coverage

• visible expertise signals

• strong external sourcing

• clean structure

• accessible technical foundations

Think about it this way. Once you’re in the pool of credible sources, quality alone is not enough. The page also has to be easy for AI to use.

What makes content citable?

After looking across citation studies and audit patterns from tools like Otterly and Profound, the same ideas come up again and again.

Pages that earn citations tend to be strong in the same seven areas.

Does your opening sentence pass the extraction test?

This is one of the biggest reasons good content gets skipped.

AI often pulls from the top of the page first. If your opening gives a clean, factual answer, you have a much better shot at being cited. If it starts with commentary, a joke, a long scene-setter, or a provocative hook, the model may move on before it reaches the useful part.

For example:

Weak opening:

“SEO is dead, according to every AI search expert that showed up last week.”

Better opening:

“Generative Engine Optimization, or GEO, is the process of optimizing content so it gets cited in AI-generated answers.”

The second one is plainer. That’s exactly why it works.

A lot of writers are trained to make the first line clever. For human readers, that can help. For AI extraction, it often hurts.

If the first 1 to 2 sentences don’t answer the question clearly, you’re making citation less likely from the start.
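Here’s a minimal Python sketch of how you could pull out the part of a draft that matters for this test. It’s a rough review aid, not how any AI system actually extracts text: the function names, the naive sentence splitter, and the idea of checking whether the opening names your target term are all assumptions made for illustration.

```python
import re


def opening_sentences(text: str, count: int = 2) -> list[str]:
    """Return the first `count` sentences using a naive punctuation split."""
    # Splits after ., ! or ? followed by whitespace.
    # Abbreviations such as "e.g." will confuse this simple rule.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if s][:count]


def first_words(text: str, limit: int = 200) -> str:
    """Return the first `limit` words, the block to read as a standalone answer."""
    return " ".join(text.split()[:limit])


def opening_names_term(text: str, term: str, count: int = 2) -> bool:
    """Check whether the opening sentences mention the target term at all."""
    opening = " ".join(opening_sentences(text, count)).lower()
    return term.lower() in opening


# The two openings quoted above, used as sample input.
weak = "SEO is dead, according to every AI search expert that showed up last week."
better = (
    "Generative Engine Optimization, or GEO, is the process of optimizing "
    "content so it gets cited in AI-generated answers."
)

print(opening_names_term(weak, "Generative Engine Optimization"))    # False
print(opening_names_term(better, "Generative Engine Optimization"))  # True
```

A passing check only tells you the opening names the topic. You still need to read the first 200 words yourself and ask whether they answer the question without the rest of the page.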

Is your content complete enough, or just detailed?

A lot of content goes deep without actually being complete.

That’s a problem, because AI prefers the most complete answer, not the most detailed answer.

If your post covers 6 of the 10 subtopics people expect around a topic, it may lose to a competitor that covers 9 of them, even if your writing is sharper. That’s what Otterly’s citation research points to. The pages that win citations are often not the longest. They’re the ones that cover the full question space better.

Before publishing, it helps to do a simple gap check:

• review competing H2s

• scan common follow-up questions

• look for subtopics your draft skips

• ask whether the page actually resolves the search intent end-to-end
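The gap check above can be sketched as a simple set comparison. This assumes you’ve already listed the subtopics readers expect (from competing H2s and follow-up questions) and the ones your draft covers. The subtopic names below are hypothetical placeholders, and exact-match comparison is a simplification, since real headings rarely line up word for word.

```python
def coverage_gap(expected: list[str], covered: list[str]) -> tuple[float, list[str]]:
    """Return the share of expected subtopics covered and the ones still missing."""
    expected_set = {topic.strip().lower() for topic in expected}
    covered_set = {topic.strip().lower() for topic in covered}
    missing = sorted(expected_set - covered_set)
    if not expected_set:
        return 1.0, []
    ratio = (len(expected_set) - len(missing)) / len(expected_set)
    return ratio, missing


# Hypothetical subtopic list gathered from competing pages.
expected_subtopics = [
    "definition", "how it works", "benefits", "examples", "tools",
    "checklist", "common mistakes", "measurement", "pricing", "faq",
]
# Hypothetical list of what the draft currently covers (6 of 10).
draft_subtopics = [
    "definition", "how it works", "benefits", "examples", "tools", "checklist",
]

ratio, missing = coverage_gap(expected_subtopics, draft_subtopics)
print(f"Coverage: {ratio:.0%}")
print("Missing:", missing)
```

The missing list is the useful part. Each entry is a candidate section to add before publishing.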

This matters because AI systems don’t just look for a smart paragraph. They look for confidence that the page can answer the full question well enough to cite.

Does AI know who is speaking, and why they’re credible?

AI systems care about entities. They want to know:

• who wrote this

• what authority they have

• what brand they’re associated with

• whether the source sounds trustworthy

This is why named authorship matters. “Team Brand” is vague. A real person with visible expertise is stronger.

For example:

• weak: Team Writesonic

• stronger: Drishti Chawla, Growth Marketer at Writesonic

Profound’s research adds another useful point here. It suggests AI models respond better to what it calls semiotic cues of helpfulness. In practice, that means content tends to feel more trustworthy when it:

• acknowledges tradeoffs

• includes nuance

• avoids one-sided hype

• cites external sources

• sounds like it comes from someone who has actually done the work

This is one reason generic brand copy often performs poorly in AI search. It may sound polished, but not necessarily trustworthy.

And trust is what gets you cited.

Can AI crawlers actually access your page?

This is the boring part that breaks everything.

According to Otterly, 73% of websites have technical barriers blocking AI crawlers. That means many teams are trying to improve copy when the real issue is that the page is hard for AI systems to access in the first place.

When we onboard new brands into Writesonic, the first thing we check is crawler access. In roughly 7 out of 10 cases, at least one major AI crawler is blocked, and the team had no idea. This is the most common silent killer of AI visibility.

The basics matter:

• does robots.txt allow GPTBot, ClaudeBot, PerplexityBot, Google-Extended, and related crawlers?

• does the page load its core content without heavy JavaScript dependency?

• is article schema present with author and publishing dates?

• is FAQ schema implemented where relevant?

• is the meta description factual and useful?

If the front door is locked, the quality of the content inside doesn’t matter.
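A quick way to test the robots.txt item is Python’s standard `urllib.robotparser` module. The sketch below is a first-pass check under a few assumptions: the sample robots.txt and the example.com URL are made up, the agent list is copied from the bullet above, and Python’s parser uses simple prefix matching, so individual crawlers may interpret edge cases differently.

```python
from urllib.robotparser import RobotFileParser

# Agent names taken from the list above; extend as needed.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def crawler_access(robots_txt: str, page_url: str, agents=AI_CRAWLERS) -> dict[str, bool]:
    """Report, per user agent, whether robots.txt allows fetching page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, page_url) for agent in agents}


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

for agent, allowed in crawler_access(sample_robots, "https://example.com/blog/post").items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

To check a live site instead of a pasted string, `RobotFileParser` can also fetch the file itself through `set_url()` followed by `read()`. Keep in mind that robots.txt is only one possible barrier. Firewalls or CDN bot rules can block crawlers even when robots.txt allows them.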

This is also where manual work starts to fall apart at scale. Once you’re tracking AI visibility across multiple platforms, prompts, and pages, you need a way to see which content is being cited, which pages are being ignored, and where the technical gaps are.

Is your content structured for extraction, not just reading?

This is where citation readiness becomes practical.

A lot of content is written to read smoothly from top to bottom. But AI doesn’t experience content the way a human does. It extracts blocks.

That means headings, short paragraphs, lists, definitions, tables, and direct-answer sections matter a lot.

Four structure rules make a real difference:

1. Start sections with the answer

Lead with the point. Then explain it.

2. Use question-based headings

Headings that match real user prompts are easier for AI systems to map to common queries.

3. Keep paragraphs short

Two to four sentences is usually enough. Long walls of text reduce clarity.

4. Stay consistent with terminology

If you mean GEO, say GEO consistently. Don’t keep switching labels unless you’re intentionally comparing concepts.
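Rule 3 is the easiest one to check automatically. Here’s a small Python sketch that flags paragraphs longer than four sentences. It assumes paragraphs are separated by blank lines and uses a naive sentence split, so treat the output as a prompt for review rather than a verdict. The function name and threshold parameter are hypothetical.

```python
import re


def long_paragraphs(text: str, max_sentences: int = 4) -> list[tuple[int, int]]:
    """Return (paragraph number, sentence count) for paragraphs over the limit."""
    flagged = []
    # Paragraphs are assumed to be separated by one or more blank lines.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for number, paragraph in enumerate(paragraphs, start=1):
        # Naive split after ., ! or ? followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        if len(sentences) > max_sentences:
            flagged.append((number, len(sentences)))
    return flagged


draft = (
    "GEO is the process of optimizing content for AI citations. It builds on SEO.\n"
    "\n"
    "One. Two. Three. Four. Five."
)

print(long_paragraphs(draft))  # [(2, 5)]
```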

Good structure does not make a piece feel robotic. It makes it easier to use.

And in AI search, usability wins citations.

Does publishing alone make content citable?

Short answer: no.

Distribution is where most teams stall. They hit publish and move on. The brands we see winning citations treat publish day as day one of a 30-day push: LinkedIn posts, Reddit threads, expert quotes. It’s not optional anymore.

AI systems often cite content that has broader validation across the web. That can include:

• Reddit discussions

• LinkedIn posts

• YouTube mentions

• backlinks

• roundups

• expert references

A page with zero external conversation around it is at a disadvantage.

There’s another reason distribution matters: it forces sharper thinking. If your best idea can’t survive as a short LinkedIn post, a quote, or a one-line takeaway, it may not be strong enough yet.

That’s why quotable framing matters so much.

A line like this:

73% of websites have technical barriers blocking AI crawlers.

is easy to reuse, repeat, and cite.

A good insight buried in paragraph eight usually isn’t.

Why does monitoring after publishing matter so much?

Because citation performance is not static.

AI systems change. Competitors update their pages. Query behavior shifts. What gets cited today may stop getting cited next month.

That’s why publishing should be treated as the start of the feedback loop, not the end.

A practical monitoring process looks like this:

In the first 2 weeks

Test the target query in:

• ChatGPT

• Perplexity

• Gemini

• Google AI Overviews

Check:

• whether you’re cited

• which section gets pulled

• who gets cited instead

In weeks 2 to 4

Review whether AI crawlers are reaching the page and whether referral patterns are changing.

Within 30 days

If the page is still not getting cited, revise it:

• improve the opening

• add missing subtopics

• include stronger data

• clarify authorship

• tighten structure

This is where teams that iterate consistently start to pull ahead. They don’t just publish. They adjust based on what AI systems are actually doing.
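If you’re running these checks by hand, it helps to log them in a consistent shape. The Python sketch below is one possible structure, not a description of how Writesonic or any other tool stores this data. The `CitationCheck` fields mirror the three things to check above, and every name, date, and domain in it is hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date

# Platforms listed in the monitoring steps above.
PLATFORMS = ("ChatGPT", "Perplexity", "Gemini", "Google AI Overviews")


@dataclass
class CitationCheck:
    checked_on: date
    platform: str
    query: str
    cited: bool               # were you cited?
    section_pulled: str = ""  # which section got pulled, if any
    cited_instead: str = ""   # who got cited instead, if anyone


def uncited_platforms(checks: list[CitationCheck]) -> list[str]:
    """Platforms with no confirmed citation yet (including ones never tested)."""
    cited = {check.platform for check in checks if check.cited}
    return [platform for platform in PLATFORMS if platform not in cited]


def cited_instead_counts(checks: list[CitationCheck]) -> list[tuple[str, int]]:
    """Sources cited in your place, most frequent first."""
    counts = Counter(
        check.cited_instead for check in checks if not check.cited and check.cited_instead
    )
    return counts.most_common()


# Hypothetical manual checks for one target query.
log = [
    CitationCheck(date(2025, 1, 6), "ChatGPT", "what is geo", False, cited_instead="competitor-a.example"),
    CitationCheck(date(2025, 1, 6), "Perplexity", "what is geo", True, section_pulled="TL;DR"),
    CitationCheck(date(2025, 1, 13), "ChatGPT", "what is geo", False, cited_instead="competitor-a.example"),
    CitationCheck(date(2025, 1, 13), "Gemini", "what is geo", False, cited_instead="competitor-b.example"),
]

print(uncited_platforms(log))
print(cited_instead_counts(log))
```

The second function is where the revision list comes from. The source that keeps getting cited in your place is the page to compare your opening, coverage, and structure against.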

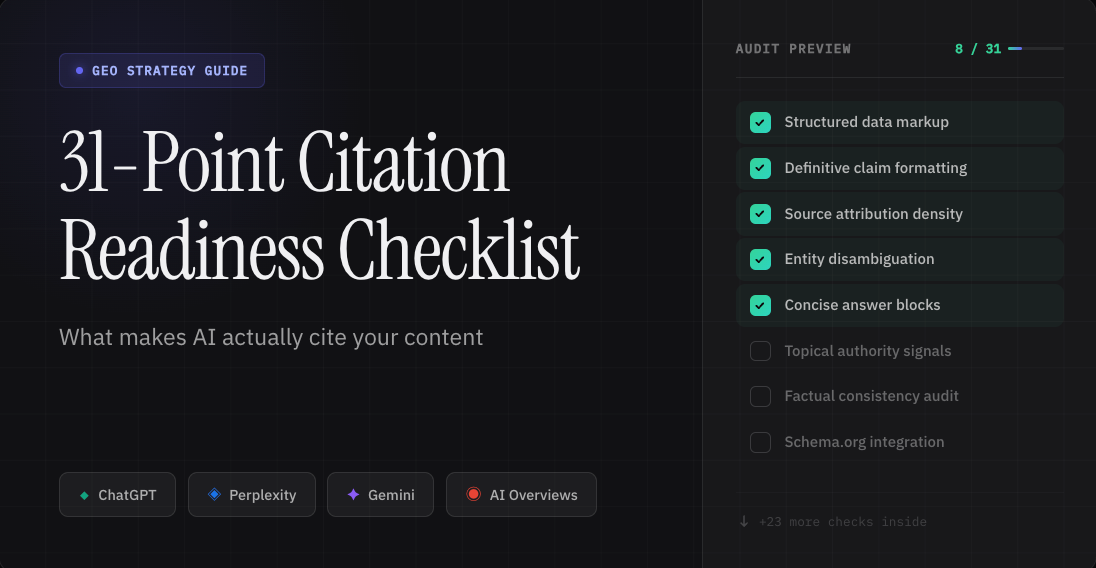

The 31-point citation readiness checklist

Use this before every publish.

Section 1: Opening and structure

1. The first 1 to 2 sentences give a clean, factual definition or direct answer

2. The first 200 words work as a standalone answer

3. A clear table of contents is present with descriptive anchor links

4. A TL;DR or Key Takeaways block appears near the top

5. The H1 includes the exact query or phrase you want to be cited for

Section 2: Content depth and completeness

6. The article covers every major subtopic the reader expects

7. Each section answers one specific question clearly

8. Key terms are defined clearly

9. Specific stats or data points support the main claims

10. At least one comparison table or framework is included

11. Real examples, case studies, or use cases are included

Section 3: Entity clarity and authority signals

12. The author name and credentials are visible

13. External sources are cited with links

14. Brand positioning is clear and consistent

15. The article includes original insight or a clear point of view

16. It acknowledges nuance, tradeoffs, or limitations

Section 4: Technical SEO for AI crawlers

17. FAQ schema is implemented with concise answers

18. Article schema includes author, datePublished, and dateModified

19. robots.txt allows major AI crawlers

20. Core content loads without JavaScript dependency

21. An llms.txt file exists at the domain root

22. The meta description is factual and concise
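For items 17 and 18, here’s a minimal Python sketch that builds Article and FAQPage markup as JSON-LD using schema.org’s vocabulary. The helper names are hypothetical, the headline and dates are placeholder values, and the field set is a bare minimum for illustration. Check the structured data documentation for the properties your pages actually need.

```python
import json


def article_schema(headline, author_name, author_title, date_published, date_modified):
    """Build a minimal schema.org Article object with author and dates."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
        "datePublished": date_published,
        "dateModified": date_modified,
    }


def faq_schema(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def to_script_tag(data):
    """Wrap the object in a JSON-LD script tag for the page's HTML."""
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder headline and dates; the author is the example named earlier in this guide.
article = article_schema(
    "What is citation readiness?",
    "Drishti Chawla",
    "Growth Marketer",
    "2025-01-15",
    "2025-02-01",
)
faq = faq_schema([
    ("What is citation readiness?",
     "Citation readiness is the practice of structuring content so AI models "
     "can extract, understand, and reference it in generated answers."),
])

print(to_script_tag(article))
print(to_script_tag(faq))
```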

Section 5: Formatting for AI extraction

23. H2s and H3s match real user questions or exact query phrasing

24. Each section starts with a direct answer before explanation

25. Paragraphs are short and easy to extract

26. Bullet points and numbered lists are used where useful

27. Tables are used for comparisons and structured information

28. Terminology stays consistent throughout the article

Section 6: Distribution and authority building

29. The post is shared across LinkedIn, X, and relevant communities

30. At least one external backlink, quote, or mention is secured

31. Key findings are phrased as quotable, one-sentence takeaways

What you can do right now

• Rewrite the first 1–2 sentences of your top 5 pages to be clean, factual definitions

• Check robots.txt for GPTBot, ClaudeBot, and PerplexityBot access

• Add FAQ schema to your top-traffic pages

• Convert vague headings (“Understanding the Landscape”) to question-based H2s (“What is GEO?”)

• Add a named author with credentials to every blog post

• Extract your best insight from each post into a standalone, quotable one-liner

• Share each post on LinkedIn and in one relevant community within 48 hours of publish

What needs a monitoring tool

• Track which prompts your brand appears in across ChatGPT, Perplexity, Gemini, and AI Overviews

• Monitor AI crawler activity at scale (which pages get crawled, which get skipped)

• Identify citation gaps — prompts where competitors are cited and you’re not

• Get prioritized action items ranked by effort and impact

• Benchmark your AI visibility against competitors over time

See where your content stands across ChatGPT, Perplexity, Gemini, and AI Overviews, and get a prioritized list of what to fix. Book a demo.

How should you score the checklist?

Use this grading model before publishing:

| Score | Meaning | Action |
| --- | --- | --- |
| 28 to 31 | Strong citation readiness | Publish and monitor |
| 22 to 27 | Good foundation, but gaps remain | Revise before publishing |
| 15 to 21 | Structural issues are likely holding it back | Rework |
| Below 15 | Not ready for AI citation | Restructure before publishing |
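If you track checklist results in a script or spreadsheet export, the grading table translates directly into a lookup. This Python sketch assumes one point per checklist item passed, for a maximum of 31. The function name is hypothetical.

```python
def readiness_band(score: int) -> tuple[str, str]:
    """Map a checklist score (0 to 31) to its meaning and recommended action."""
    if not 0 <= score <= 31:
        raise ValueError("score must be between 0 and 31")
    if score >= 28:
        return "Strong citation readiness", "Publish and monitor"
    if score >= 22:
        return "Good foundation, but gaps remain", "Revise before publishing"
    if score >= 15:
        return "Structural issues are likely holding it back", "Rework"
    return "Not ready for AI citation", "Restructure before publishing"


meaning, action = readiness_band(24)
print(f"{meaning}: {action}")  # Good foundation, but gaps remain: Revise before publishing
```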

This is not about chasing a perfect score. It’s about removing the obvious reasons your page gets skipped.

Final takeaway

AI doesn’t care what your page deserves. It cares whether it can use it.

If the answer is buried, if the structure is messy, if the expertise is vague, or if the page is hard to crawl, you won’t get cited. It doesn’t matter how much effort went into the draft.

The pages winning in AI search are not just well-written. They’re easy to extract, easy to trust, and easy to attribute.

That’s what citation readiness is.

This is the new baseline for being visible in AI-generated answers.

Run the checklist. Fix the gaps. And if you want to see exactly where your brand is getting cited — and where it’s not — Writesonic shows you. Book a demo.

Growth Marketer

Growth marketer at Writesonic, passionate about scaling content and building high-performing funnels. Ex-MoEngage, Whatfix, Tata AIG, Product Fruits, and TripleDart.